No embedded latex yet.

First a remark about writing f(x)=-V'(x) and gradient flows:

Gradient flows are used to better understand graphs of complicated functions.

In machine learning, gradient descent on the 'loss' function is Euler's method applied to the gradient flow of that function.
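A minimal sketch of that remark, assuming a double-well potential V(x) = x^4/4 - x^2/2 chosen only for illustration (it is not from these notes). Each Euler step for ẋ = -V'(x) has the same form as a gradient descent update, with the step size playing the role of the learning rate.

```python
def grad_V(x):
    # Assumed example potential: V(x) = x**4/4 - x**2/2, so V'(x) = x**3 - x.
    return x**3 - x


def euler_gradient_flow(x0, step, n_steps):
    """Euler's method for xdot = -V'(x); identical to gradient descent on V."""
    x = x0
    for _ in range(n_steps):
        x = x - step * grad_V(x)  # x_{n+1} = x_n - step * V'(x_n)
    return x


# Starting to the right of the local maximum at x = 0, the flow settles
# in the minimum of V at x = 1; starting to the left, it settles at x = -1.
x_right = euler_gradient_flow(x0=0.5, step=0.1, n_steps=200)
x_left = euler_gradient_flow(x0=-0.5, step=0.1, n_steps=200)
print(x_right, x_left)
```

The names `grad_V` and `euler_gradient_flow` are hypothetical helpers for this sketch.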

Next a remark about the simple examples of the form ẋ=f(x,r):

It's always instructive to take a few special choices of r and practice your skills for ẋ=f(x).

Practice the geometric approach as well as the implicit solution formula F(x)=t+C.

F must be a well-defined antiderivative of 1/f(x) on the intervals you consider!
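As a check on the implicit formula, here is a small sketch for the assumed example ẋ = 1 + x^2 (that is, ẋ = r + x^2 with r = 1, picked only for illustration). Here f has no zeros, so F(x) = arctan(x) is an antiderivative of 1/f(x) on the whole real line. The code integrates the equation numerically and compares F(x(t)) with t + C.

```python
import math


def f(x):
    # Assumed example right-hand side: xdot = 1 + x**2.
    return 1.0 + x * x


def F(x):
    # Antiderivative of 1/f(x) = 1/(1 + x**2).
    return math.atan(x)


def rk4(x0, t_end, n):
    """Integrate xdot = f(x) from t = 0 to t_end with n classical RK4 steps."""
    h = t_end / n
    x = x0
    for _ in range(n):
        k1 = f(x)
        k2 = f(x + 0.5 * h * k1)
        k3 = f(x + 0.5 * h * k2)
        k4 = f(x + h * k3)
        x += h * (k1 + 2 * k2 + 2 * k3 + k4) / 6
    return x


x0 = 0.0
C = F(x0)          # fixed by the initial condition: F(x0) = 0 + C
t = 1.0            # stays below the blow-up time pi/2 - C
x_num = rk4(x0, t, 1000)
residual = F(x_num) - (t + C)
print(x_num, residual)
```

The residual should be close to zero as long as t stays below π/2 - C, where the exact solution x(t) = tan(t + C) blows up. For an f with zeros, the same check only makes sense on an interval between two equilibria.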

The geometric approach for different r-values, collected in a bifurcation diagram showing the equilibria for each r:

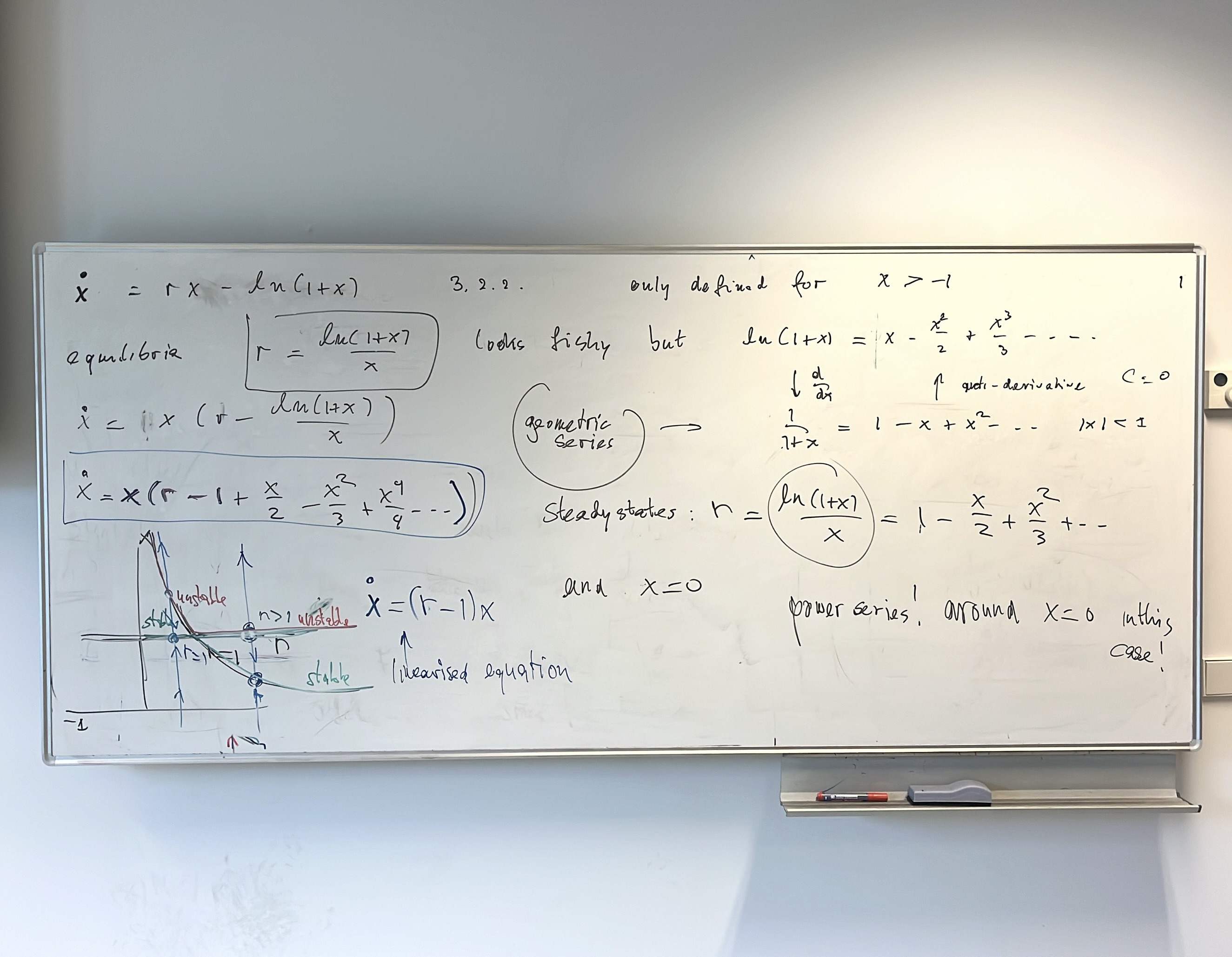

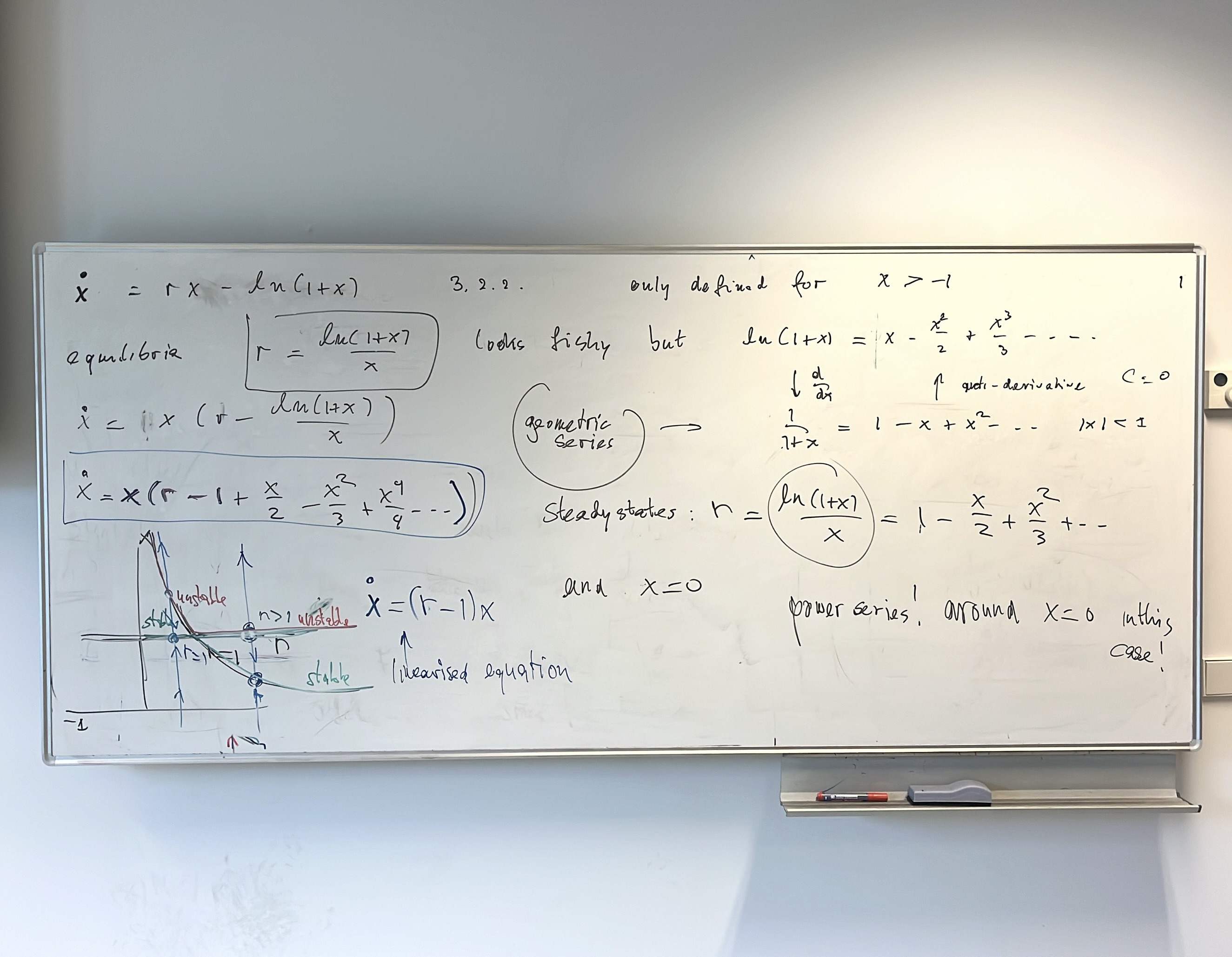
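The data behind such a diagram can be sketched in a few lines. The example below assumes the family ẋ = r + x^2, which is not necessarily the one shown in the figures. For each r it lists the equilibria and reads off stability from the sign of f'(x) = 2x.

```python
import math


def equilibria(r):
    """Equilibria of the assumed example xdot = r + x**2, with stability labels."""
    if r > 0:
        return []                       # r + x**2 > 0 everywhere: no equilibria
    if r == 0:
        return [(0.0, "half-stable")]   # f >= 0 on both sides of x = 0
    root = math.sqrt(-r)
    # f'(x) = 2x: negative at -root (stable), positive at +root (unstable).
    return [(-root, "stable"), (root, "unstable")]


for r in (-1.0, -0.25, 0.0, 1.0):
    print(r, equilibria(r))
```

Plotting the stable and unstable points against r gives the two branches of the diagram, which meet at r = 0.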

Next an example from which we learn something.

When solving f(x,r)=0 we may miss something, in this case the (trivial) solution x=0.

Note this example is of the form ẋ=rx-f(x) with f(0)=0.

The power series of f(x) around x=0 tells you (not) everything (but a lot).

Another nice example: ẋ=r-cos(x).
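A short sketch for this example, restricted to one period of x (the function name is a hypothetical helper). Equilibria solve cos(x) = r, so they exist only for |r| ≤ 1, and stability follows from the sign of f'(x) = sin(x).

```python
import math


def equilibria_in_period(r):
    """Equilibria of xdot = r - cos(x) with x in [-pi, pi], with stability labels."""
    if abs(r) > 1:
        return []                          # cos(x) = r has no solution
    a = math.acos(r)                       # a lies in [0, pi]
    if abs(r) == 1:
        # The two roots +a and -a coincide (mod 2*pi) and f does not change sign.
        return [(a, "half-stable")]
    # f'(x) = sin(x): negative at -a (stable), positive at +a (unstable).
    return [(-a, "stable"), (a, "unstable")]


for r in (-1.0, 0.0, 0.5, 1.0, 2.0):
    print(r, equilibria_in_period(r))
```

All other equilibria follow by adding multiples of 2π. The pairs appear or disappear at r = ±1.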